Guide

Configure Onyx to use models served by LM Studio. Onyx has a built-in integration with LM Studio that auto-discovers your loaded models, including their capabilities (vision, reasoning) and context length.

Set Up LM Studio and Load Your Models

Download LM Studio from lmstudio.ai and load the models you want to use.

Start the LM Studio local server. LM Studio runs on port 1234 by default.

If LM Studio is running on a different machine than Onyx, make sure the server is accessible from the Onyx host (e.g., http://<lm-studio-host>:1234).

Navigate to Language Models

Access the Admin Panel from your user profile icon, then navigate to Configuration → Language Models.

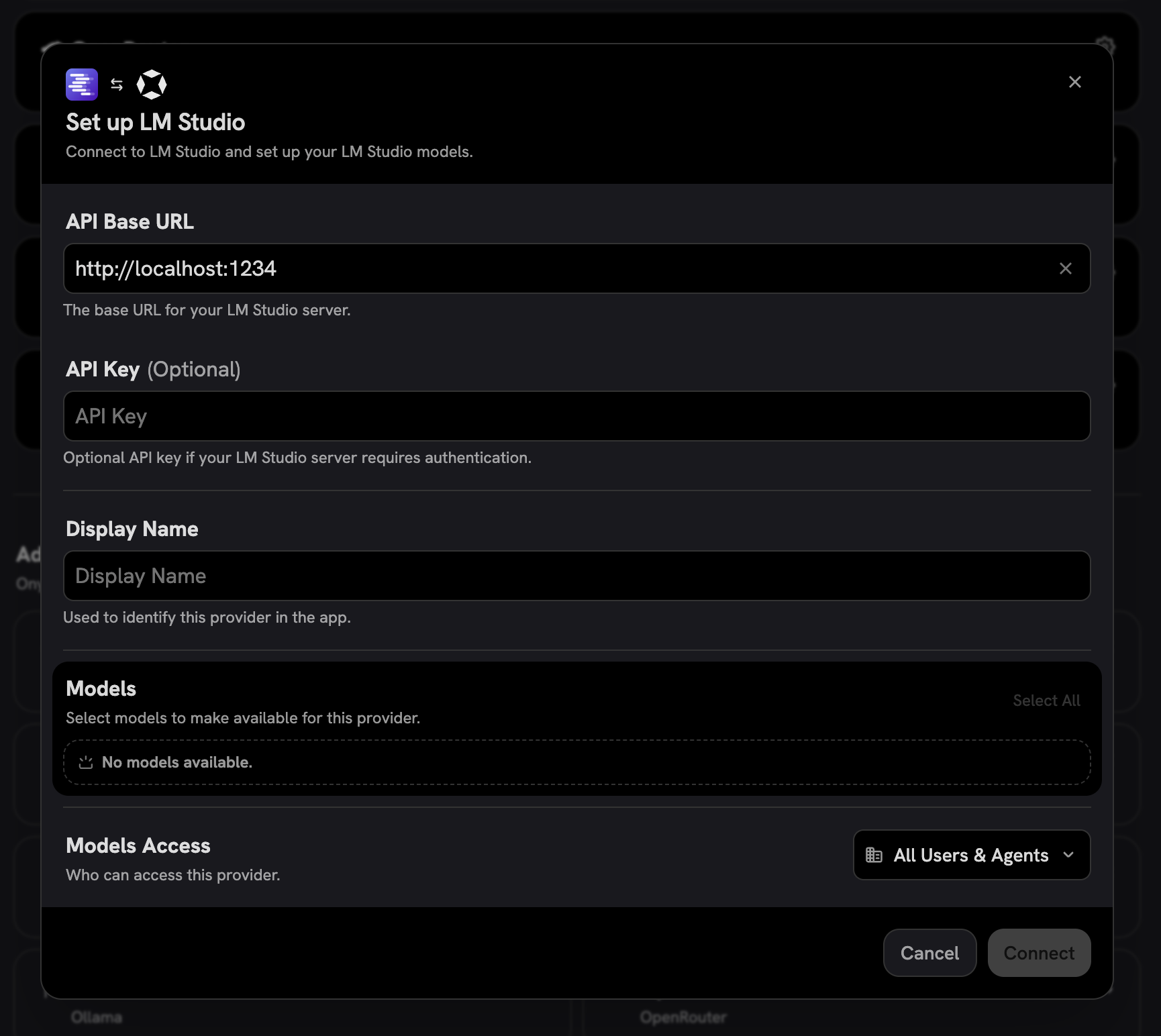

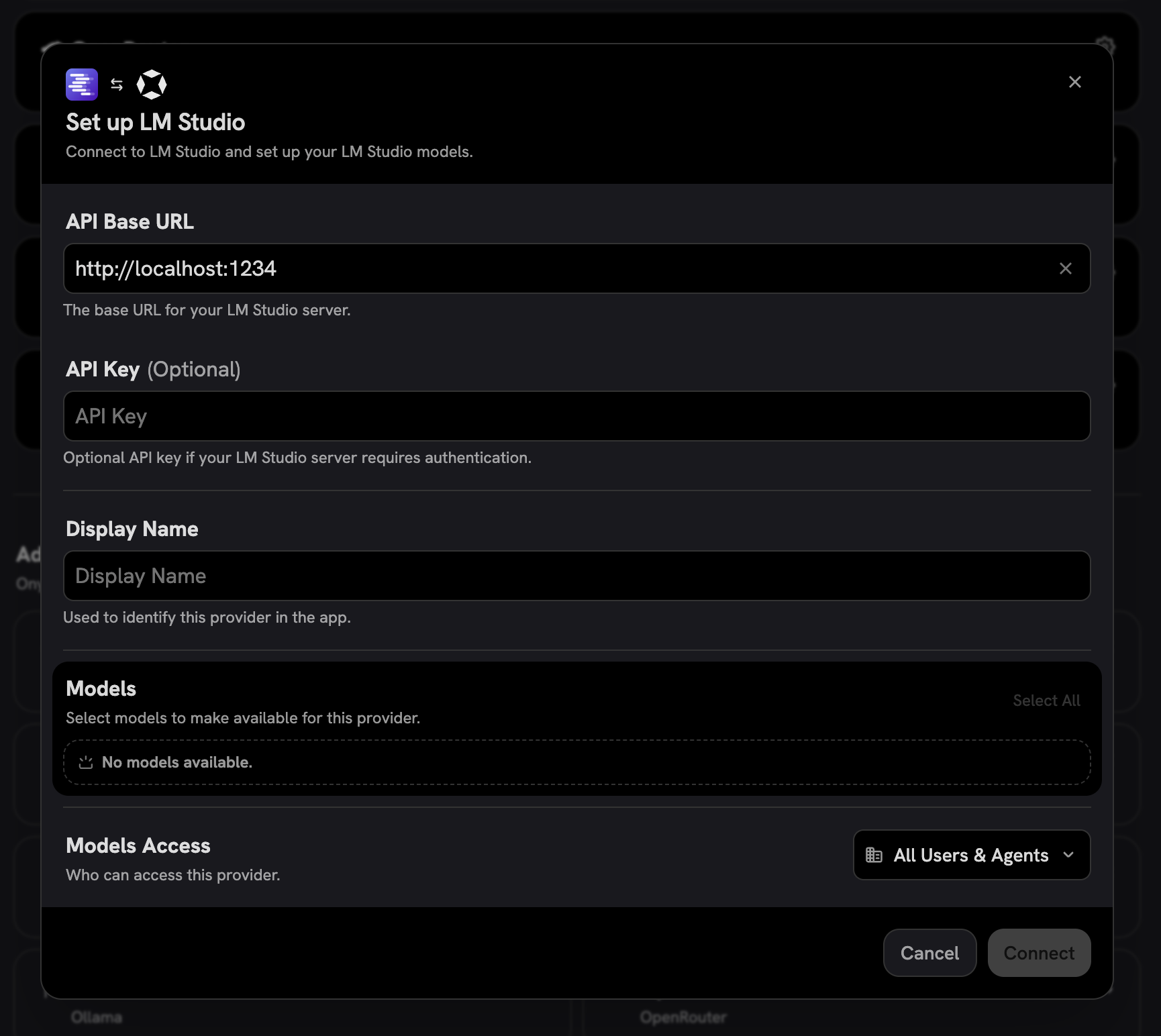

Configure LM Studio

Select LM Studio from the available providers.

Give your provider a Display Name.

Set the API Base URL to your LM Studio server address (e.g., http://localhost:1234).

Onyx will automatically connect and discover your loaded models.
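
If discovery returns no models, it can help to confirm from the Onyx host that the API Base URL is reachable and that LM Studio reports the models you loaded. The following is a minimal sketch, not Onyx's own discovery code. It assumes the LM Studio server exposes its OpenAI-compatible model listing at /v1/models; the helper names models_url, parse_model_ids, and list_models are hypothetical and exist only for this example.

```python
import json
from urllib.request import urlopen


def models_url(api_base_url: str) -> str:
    """Build the model-listing URL from an API Base URL such as http://localhost:1234."""
    base = api_base_url.rstrip("/")
    # Assumption: the server offers an OpenAI-compatible listing at /v1/models.
    if base.endswith("/v1"):
        return base + "/models"
    return base + "/v1/models"


def parse_model_ids(payload: dict) -> list[str]:
    """Extract model identifiers from an OpenAI-style {"data": [{"id": ...}]} payload."""
    return [entry["id"] for entry in payload.get("data", []) if "id" in entry]


def list_models(api_base_url: str, timeout: float = 5.0) -> list[str]:
    """Query the server and return the identifiers of the models it reports.

    Raises OSError (for example urllib.error.URLError) if the server is unreachable.
    """
    with urlopen(models_url(api_base_url), timeout=timeout) as response:
        return parse_model_ids(json.load(response))
```

Calling list_models("http://localhost:1234") on the Onyx host should return the identifiers of the models currently loaded in LM Studio. A connection error suggests the server is not running, is listening on a different port, or is not reachable from that host.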

Choose Visible Models

In the Advanced Options, you will see a list of all models available from this provider.

You may choose which models are visible to your users in Onyx. Setting visible models is useful when a provider publishes multiple models or several versions of the same model.